Jensen Huang on Nvidia's future

Dwarkesh Patel sits down with Jensen Huang — and what follows is one of the most intense, occasionally combative, and genuinely illuminating conversations I've heard in a while. Jensen was not in a mood to entertain bad analogies. More on that later.

There is a line Jensen drops early in the conversation that I keep thinking about:

Nvidia's job is to transform electrons into tokens.

That's it. That's the whole company, distilled. Electrons go in. Tokens — the currency of AI — come out. Everything else is machinery in service of that idea.

Before we go further, Jensen lays out what he calls the five layers of AI, like a cake. And like any good cake, every layer matters:

- Energy — the foundation. Nothing runs without it.

- Chips — the hardware doing the actual work.

- Infrastructure — data centres, networking, the plumbing.

- Models — the AI itself.

- Applications — what you and I actually use.

Keep this in mind. Jensen refers back to it constantly, and so will I.

The Supply Chain Nobody Talks About

No surprises here. Nvidia has locked in approximately $250 billion worth of purchase commitments with foundries, memory suppliers, and packaging partners before demand has even fully materialised. This is not reactive. This is a company pre-empting the queue years in advance.

Take CoWoS (Chip on Wafer on Substrate) — TSMC's advanced packaging technology. A year or two ago, it was the bottleneck everyone was panicking about. Today? Nobody's talking about it. Because it doubled. Supply caught up. Similarly, HBM — the high-bandwidth memory that feeds GPUs — has essentially gone from exotic to mainstream computing infrastructure.

But Jensen is not resting. Bottlenecks are being anticipated and addressed years before they become crises. Silicon photonics. Lumentum. Coherent. These are not household names, but Nvidia is already in partnership with TSMC on something called COUPE (Compact Universal Photonic Engine), even licensing patents down the supply chain to ensure nobody gets stuck.

The one thing Jensen says he genuinely worries about is not capacity. It is energy policy. Capacity bottlenecks are 2–3 year problems. Energy infrastructure is a decade-long problem. When the world's most valuable chip company says it is not worried about manufacturing but is worried about electricity — that is worth sitting with.

The GTC Effect

How does a chip company convince the world's biggest companies to spend billions on hardware they don't fully understand yet?

Jensen personally briefs CEOs. He brings them to GTC — Nvidia's annual AI conference — where the entire supply chain, from upstream suppliers to downstream application builders, assembles in one place. The argument is not made in a slide deck. It is made by showing people the scale of what is already happening and letting them decide whether they want to be inside or outside of it.

Instantaneous demand is greater than total supply. Jensen frames this not as a problem but as a signal. When everyone wants it and nobody can get enough of it, that is not a crisis. That is a very good business.

What Nvidia Actually Is (And Is Not)

This section is where it gets technical — and where Jensen got a bit irritated with the questions. Bear with me.

Most people think of Nvidia as an AI chip company. Jensen insists it is an accelerated computing company. The distinction matters.

A TPU (Tensor Processing Unit) — like what Google uses, and yes, both Claude and Gemini are trained on TPUs — is purpose-built for AI. It is extremely good at matrix multiplication, which is essentially what AI runs on. It wastes no space on things it does not need. It is a specialist.

A GPU is a generalist that became a specialist. It can do molecular dynamics, quantum chromodynamics, fluid simulations, structured data processing, and yes, AI. That breadth is the moat. Because when someone invents a completely new type of model architecture (say, a hybrid SSM, or a fusion of autoregressive and diffusion models), Nvidia can run it. The TPU may not be able to. As an app developer, you will normally build for the most widely deployed architecture, which is Nvidia.

TPUs improve roughly 25% per year, following Moore's Law. Nvidia, by contrast, can make 10x or 100x leaps through fundamental algorithm changes combined with hardware redesigns. The jump from Hopper to Blackwell, for example, is reportedly a 50x gain in energy efficiency. That is not incremental. That is a different game.
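To put the two growth rates side by side, here is a rough back-of-the-envelope sketch. It assumes the 25% figure compounds cleanly every year, which is a simplification, and the helper name `years_to_reach` is made up for illustration. It asks how many years of steady 25% gains it would take to match a single 10x, 50x, or 100x leap.

```python
import math


def years_to_reach(target_multiple: float, annual_gain: float) -> float:
    """Years of compounding at `annual_gain` (0.25 means 25% per year)
    needed to reach `target_multiple` times the starting performance."""
    return math.log(target_multiple) / math.log(1.0 + annual_gain)


if __name__ == "__main__":
    # Assumption: a constant 25% improvement per year, as quoted in the post.
    for multiple in (10, 50, 100):
        years = years_to_reach(multiple, 0.25)
        print(f"{multiple}x at 25%/yr takes about {years:.1f} years")
```

Under that assumption, a 10x leap is worth roughly a decade of steady 25% gains, which is the gap Jensen is pointing at.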

And then there is CUDA (Compute Unified Device Architecture), Nvidia's programming environment. About 60% of Nvidia's revenue comes from the big hyperscalers: AWS, Microsoft Azure, Google Cloud, Oracle. But the reason CUDA will remain at the frontier of AI comes down to three things Jensen is quite clear about:

- The richness of the coding environment — engineers have built an enormous amount on top of it.

- The sheer install base — there are more developers writing in CUDA than any comparable system.

- It is embedded into every major cloud. Wherever the cloud goes, CUDA goes.

The Anthropic Question (And Why Jensen Missed It)

Here is a fun footnote.

Dwarkesh asks Jensen directly: if Nvidia is so dominant, why is Anthropic running on Google TPUs? Why is OpenAI reportedly working with AMD?

Jensen's answer, delivered with characteristic bluntness: Google and AWS invested billions into Anthropic. Of course Anthropic uses their chips. That is not a technology decision. That is a capital decision.

And then Jensen admits something rare for a CEO: he missed it. He did not anticipate that a venture capital firm would put $5–10 billion into an AI lab. He thought the capital requirements would be too absurd. He was wrong. And now? Nvidia has invested $30 billion into OpenAI and $10 billion into Anthropic.

He will not make that mistake again.

On Margins, Competitors, and Why Jensen Won't Become a Hyperscaler

Nvidia's gross margin sits around 70%. Broadcom's ASIC (Application-Specific Integrated Circuit) alternative sits around 65%. Jensen's response to the competitive threat is essentially: after all your engineering costs and switching costs, how much are you really saving?
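A quick way to see Jensen's point is to turn the two margins into prices. The sketch below is illustrative only: it assumes both vendors have the same underlying unit cost (which the post does not claim), uses a made-up cost of 100, and ignores the engineering and switching costs Jensen mentions, which would shrink the saving further.

```python
def price_for_margin(unit_cost: float, gross_margin: float) -> float:
    """Selling price implied by a unit cost and a gross margin,
    where gross_margin = (price - cost) / price."""
    return unit_cost / (1.0 - gross_margin)


# Hypothetical unit cost, identical for both vendors (an assumption).
UNIT_COST = 100.0

price_at_70 = price_for_margin(UNIT_COST, 0.70)  # roughly 333
price_at_65 = price_for_margin(UNIT_COST, 0.65)  # roughly 286
saving = 1.0 - price_at_65 / price_at_70

if __name__ == "__main__":
    print(f"Price at 70% margin: {price_at_70:.2f}")
    print(f"Price at 65% margin: {price_at_65:.2f}")
    print(f"Buyer saves about {saving:.1%} before any switching costs")
```

On these assumptions the buyer saves about 14% on the sticker price, and that is before paying for any of the work needed to move off Nvidia.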

But the more interesting question is why Nvidia doesn't just become a hyperscaler — rent out compute directly, capture even more of the value chain. Jensen's answer reveals something about his philosophy:

Do as much as needed. As little as possible.

Nvidia's job is to make the ecosystem thrive. CoreWeave, Nebius, Nscale — these companies would not exist without Nvidia's support. But Nvidia does not pick winners. It invests in everyone, in all foundation models. The reasoning is partly humble: when Nvidia started, there were 60 3D graphics companies. Only one survived. And Jensen is honest enough to say Nvidia probably would not have been at the top of anyone's list of predicted survivors back then.

On pricing: no auctions, no highest bidder. Nvidia sets a price. You decide to buy or not. Place a purchase order (PO) and Nvidia will do its best to deliver. First in, first out. There is a story about Larry Ellison and Elon Musk having dinner with Jensen and apparently begging for GPUs. Jensen says no such thing happened. Place the PO and you will get your supply.
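For the curious, first in, first out is simple enough to sketch in a few lines. This is a toy model, not a description of Nvidia's actual order system: the `PurchaseOrder` class, the `allocate_fifo` function, and the customer names are all hypothetical.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class PurchaseOrder:
    customer: str
    units: int


def allocate_fifo(orders, supply):
    """Fill purchase orders strictly in arrival order until supply runs out.

    Returns (shipments, backlog): shipments is a list of (customer, units)
    tuples, backlog is the list of orders still waiting, oldest first.
    """
    queue = deque(orders)
    shipments = []
    while queue and supply > 0:
        po = queue[0]
        shipped = min(po.units, supply)
        shipments.append((po.customer, shipped))
        supply -= shipped
        if shipped == po.units:
            queue.popleft()  # order fully served
        else:
            # Partially served: keep the remainder at the front of the queue.
            queue[0] = PurchaseOrder(po.customer, po.units - shipped)
    return shipments, list(queue)


if __name__ == "__main__":
    orders = [PurchaseOrder("A", 50), PurchaseOrder("B", 80), PurchaseOrder("C", 30)]
    shipments, backlog = allocate_fifo(orders, supply=100)
    print(shipments)
    print(backlog)
```

Notice what is missing: there is no price field and no priority field. Who you are and how much you would pay do not change your place in the queue. Only when you placed the PO does.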

Sidetrack: the PO is the critical signal in every part of sales and operations. We can have a ton of discussions, a ton of planning, a ton of events and promotions, but if the PO does not come in, all is for naught. How does one measure ROI on all that effort when no revenue shows up later?

Jensen says he prefers to be dependable over being clever. Nvidia and TSMC have worked together for 30 years without a formal legal contract. That tells you something. The conservative person inside me will beg you to have proper contracts, but there's a beauty to having no contracts. It reminds me of medieval times or the Three Kingdoms era, when a man's word was his honour.

The China Question

This is where the conversation gets genuinely uncomfortable.

Nvidia does not sell its latest chips to China today, due to US export controls. The implication is that cutting China off from advanced AI chips will prevent them from developing competitive AI. Jensen essentially says: that logic is wrong.

China manufactures more than 60% of the world's mainstream chips. Roughly 50% of the world's AI researchers are Chinese. The 7nm Hopper chips, the ones China can access, are in Jensen's view plenty good enough for serious AI work. Huawei can manufacture chips (side note: Huawei can reportedly make 2 nm chips already). SMIC has sufficient logic and HBM2 memory. DeepSeek, China's frontier model, is optimised for Huawei hardware.

Jensen's view: the idea that China cannot develop capable AI without US chips is complete nonsense.

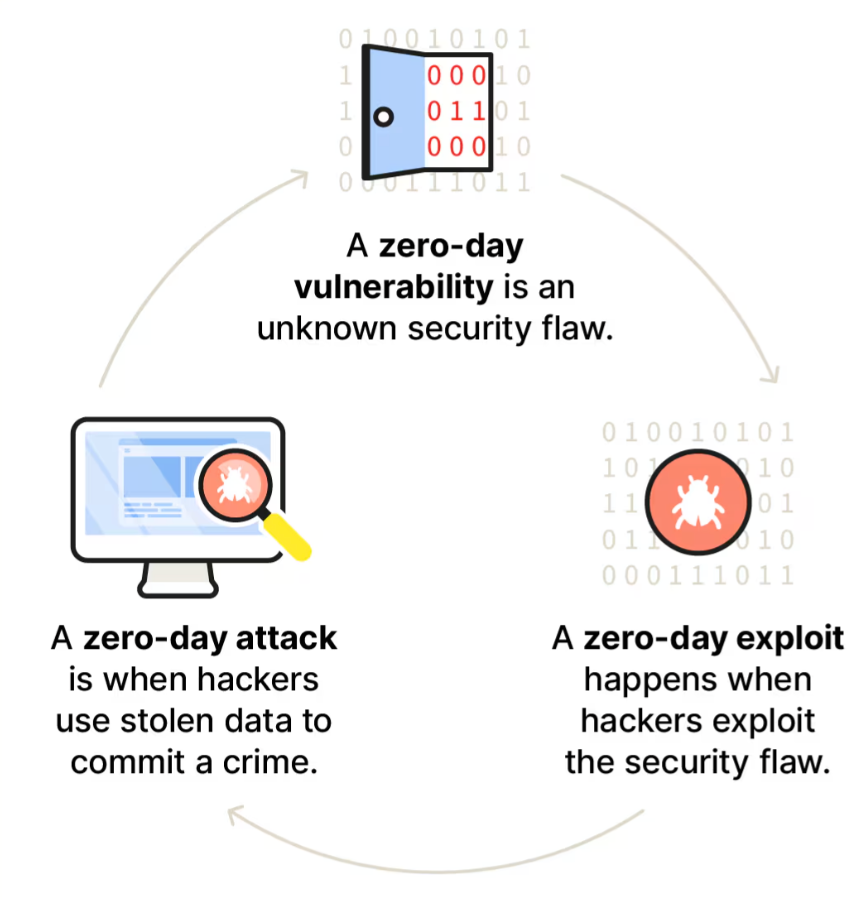

Interestingly, Mythos, an AI developed by the team behind Claude, was cited as an example of what Chinese AI capability could become with access to the latest chips. Mythos reportedly found vulnerabilities in software like OpenBSD, which was built to have no vulnerabilities, including a zero-day that had existed for 27 years. The implication is clear: AI capability, in the hands of sophisticated actors, is already a security issue regardless of chip access.

Jensen is fully supportive of the US maintaining technological leadership. Nvidia's next platforms, Vera (CPUs) and Rubin (GPUs), are US technology that China does not have. But he also argues that forcing Nvidia out of China is actually bad for the US. It cedes relationships, revenue, and influence without preventing the outcome it intends to prevent. China will progress regardless of access to the latest chips.

His ask: a proper US–China research dialogue. Not wall-building. Conversation. Open source is critical.

On Jobs, Tasks, and Why AI Won't Kill Radiology

One of Jensen's cleaner distinctions: the job of a radiologist is patient care. The task is to read a scan.

AI will take tasks. Jobs are more complicated.

He pushes back hard on the idea that scaring people about AI is a useful or accurate framing. The most important layer of the five-layer cake — remember the cake — is applications. And applications only matter if they actually diffuse into the world and help people do their jobs better. Not replace them wholesale, but change the shape of what humans do.

The number of agents and AI-assisted tool users will grow exponentially. The constraint today is not hardware or models — it is the number of human engineers who know how to use the tools. Agents will eventually help here. But Jensen is candid: agents are not good enough yet to use most of the tools they are supposed to use.

The Counterfactual

Dwarkesh ends with a hypothetical: if the deep learning revolution had never happened, what would Nvidia be?

Jensen does not hesitate. Nvidia would still be in accelerated computing. The premise of the company — that Moore's Law alone is insufficient, and that CPUs plus GPUs in parallel can deliver 100x–200x speedups — holds regardless of AI. Fluid dynamics. Molecular dynamics. Quantum chemistry. Structured and unstructured data processing. These are all real workloads that Nvidia serves today, that have nothing to do with AI.

AI is the exciting chapter. It is not the whole story.

Another side note: Jensen was visibly impatient with several of Dwarkesh's questions. At one point he flat-out said the analogies were lousy. He called out "loser premises" and questions that start from extremes. It made for excellent listening. A CEO who is not performing patience is a CEO worth paying attention to.

Source | Dwarkesh Patel — Jensen Huang on Nvidia, AI, and the Future of Computing